Law firms, accountancy practices and advisory firms are under competitive pressure to adopt AI. The ones doing it well are redesigning how work actually happens — not adding tools on top of existing workflows and hoping for the best.

Professional services firms sell expertise and judgement. AI has the potential to amplify both — but only if adoption is governed carefully enough to protect client trust and professional standards.

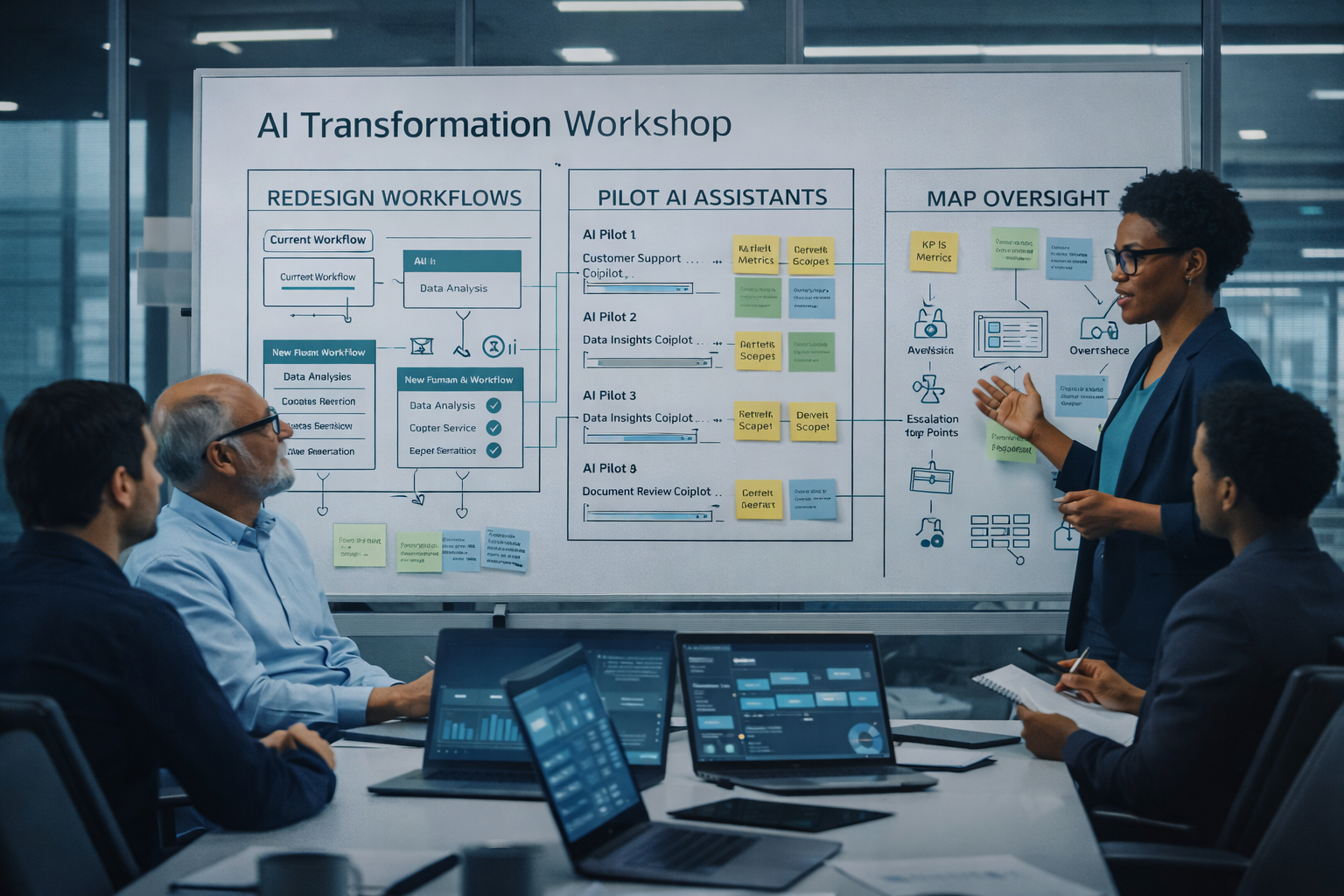

Knowledge worker productivity is the competitive advantage. AI can improve it materially, but only if workflows are redesigned around where AI genuinely helps. Adding a generative AI tool to an existing process rarely delivers what the licence cost promised. The firms seeing real impact are rethinking how work flows between people and AI — who drafts, who reviews, who signs off, and where the quality gates sit.

Client confidentiality adds a hard constraint. What happens to client data when it enters an AI model? Some firms are cautious enough to exclude client data entirely. Others are building governance frameworks that let them use it safely. Both approaches require deliberate design — not a default setting in a vendor contract.

Professional liability is the harder question. AI-assisted work needs quality controls that existing processes weren't designed for. Partners need to understand what AI did and didn't contribute to a piece of work. Clients are increasingly asking whether their advisors are using AI, and if so, how. Regulators across law, accountancy and financial advisory are paying attention.

Meanwhile, competitors are moving. Some are building genuine AI-enabled delivery models that allow them to serve clients faster and at lower cost. Others are making claims about AI integration that don't survive scrutiny. The firms that will win are the ones that redesign intelligently — capturing productivity gains without compromising the quality and judgement that clients are actually paying for.

That redesign takes leadership conviction and a structured methodology. It doesn't happen by licensing a tool and hoping people use it.

Mike led enterprise-wide AI transformation at Verimatrix — a publicly listed global SaaS company — under direct ExCom oversight. Not advising on AI adoption. Executing it across a nine-country organisation with board-level accountability.

The Verimatrix programme transformed a knowledge-intensive, quality-critical engineering organisation — structurally similar to a professional services firm. The questions that arose are the same questions that come up in every professional services AI programme: where does AI help, what needs human sign-off, how do you maintain quality, and how do you govern the output?

The programme included designing Responsible AI governance and institutionalising it across a regulated, EU-listed company; converting uncontrolled shadow AI into governed, enterprise-wide adoption under an AI Steering Group; and aligning board, technology, legal and commercial stakeholders around accountable AI deployment.

AI creates value in professional services across distinct workflow areas. Where you start depends on your client confidentiality constraints, regulatory obligations, and which governance questions need resolving first.

Precedent search, literature review, regulatory analysis, case law research. High value, lower governance risk, and often the right starting point for firms that haven't yet resolved client data questions. AI can reduce research time significantly whilst maintaining the rigour that professional standards demand.

First drafts, contract review, report structuring, brief preparation. AI-assisted drafting can reduce time on initial document creation by 30–50%, but the workflow needs clear quality gates so partners and senior professionals maintain oversight of what goes to the client.

Firms maintain large internal knowledge libraries — previous reports, case studies, methodologies, precedent files. AI knowledge assistants let staff locate and synthesise relevant material across these repositories in minutes rather than hours, improving institutional knowledge reuse.

RFP responses, capability statements, pitch preparation, client briefings. Professional services firms produce high volumes of proposals where AI assistance can meaningfully improve speed and consistency — particularly for firms responding to competitive tenders.

When AI is embedded into delivery, the economics of the firm change. Pricing models, staffing ratios, quality review processes, and training requirements all need rethinking. This is the harder and more valuable work — the difference between firms that use AI tools and firms that have genuinely AI-enabled operating models.

Meeting summaries, client updates, presentation preparation, status reporting. AI can reduce the administrative overhead that consumes senior professional time, allowing more hours to be spent on the advisory and judgement work that clients value most.

Every firm has different practice areas, different client confidentiality requirements, and different organisational readiness. The engagement always starts with understanding yours.

Whether you're a law firm exploring AI-assisted research, an accountancy practice looking at audit workflow redesign, or an advisory firm considering how AI changes your delivery model — the approach is structured around your professional obligations, not a generic playbook.

A focused 2–4 week assessment that maps where AI can genuinely improve productivity across your practice areas, identifies which governance and confidentiality requirements must be resolved first, and produces a realistic pilot recommendation. The governance risk assessment is particularly valuable in professional services — it maps which workflows have client confidentiality or professional liability implications before any pilot commitment is made.

Most firms find the honest answer is that they're further from deployment-ready than they thought. The Radar tells them specifically why, and what to resolve — so they invest in the right areas first.

A 6–12 week pilot in a real practice area or workflow, with quality controls and confidentiality protections designed in from the start. The pilot blueprint defines governance controls, professional oversight requirements, and human sign-off points alongside the workflow design. You're testing whether the AI-assisted workflow works in practice and whether the quality governance model is credible — not whether the technology works in a sandbox.

A 3–6 month engagement to move from pilot success into operational sustainability. This means redesigning delivery workflows, defining human-AI accountability structures, building quality review cycles into normal operations, and rethinking the economics — staffing ratios, pricing models, and training. For multi-practice firms, this often means establishing a shared AI operating model with clear governance across practice areas.

A retained engagement — typically 1–3 days per month — providing senior AI oversight. Especially valuable for firms whose partnership or leadership team needs experienced AI thinking without a permanent hire. Mike can engage credibly with partners, boards, and professional body requirements — because he's delivered exactly this in a regulated, publicly listed environment with board-level accountability.

Whether you're exploring AI adoption across your practice, planning a pilot in a specific workflow, or thinking about how AI changes your delivery model and economics — let's talk about what makes sense for your firm.